OpenAI did this with your health data in January. Now it wants your financial data too.

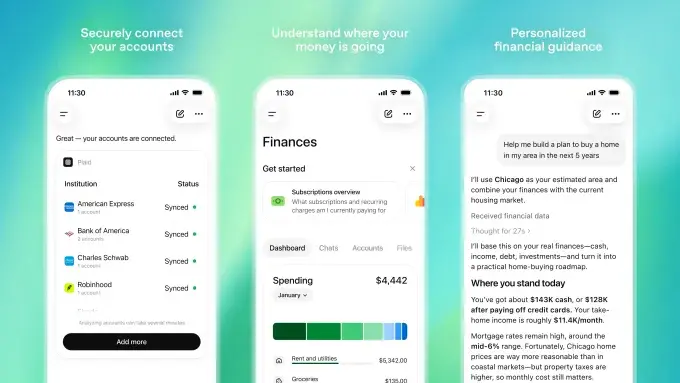

The company announced today that ChatGPT users can connect their bank accounts through Plaid, the financial bridging platform used by 12,000 institutions including Chase, Fidelity, Capital One, and Schwab. Once connected, ChatGPT gets a full view of your balances, transaction history, active subscriptions, investment portfolio, and liabilities like mortgages and credit card debt. In return you get a spending dashboard, personalized financial advice, and a chatbot that can flag unusual changes in your habits.

It’s launching in preview for Pro subscribers at $200 a month. OpenAI says Plus subscribers, and eventually everyone else, will get access later.

What it can do and what it can’t

OpenAI is careful about framing the boundaries. ChatGPT cannot make changes to your accounts or see full account numbers. Users can disconnect at any time, delete saved financial memories, and opt out of having their data used for model training.

What it can see is everything else. Your balance. Every transaction. Your stock portfolio. What you owe. And after you disconnect, OpenAI has up to 30 days to delete your data, which means pulling the plug isn’t quite as clean as it sounds.

The training setting is worth reading carefully too. The default isn’t entirely clear, and “Improve the model for everyone” as the label for sharing your financial data with OpenAI’s training pipeline is doing a lot of friendly framing around a significant decision.

The pattern

In January, OpenAI launched ChatGPT Health, a feature that let users connect medical records for health-related questions. The company was careful to note it wasn’t intended for diagnosis or treatment. The privacy questions that followed (what does OpenAI actually do with this data, how is it protected, what happens if there’s a breach) were never fully answered.

Now the same playbook, with your bank account. OpenAI says users have control over their data. What the company doesn’t specify is what OpenAI itself does with that financial information beyond AI training, or whether any additional protections exist against a system breach. That’s the part people are going to keep asking about.

What happens to all this data later

OpenAI is a company that, by its own admission, eventually needs to turn a profit. It now has the potential to build a detailed financial profile of millions of users: spending habits, debt levels, investment behavior, subscription patterns, and income signals buried in transaction history.

That’s an extraordinarily valuable dataset. The announcement doesn’t address what guardrails exist around it commercially, what happens to that data if OpenAI’s business model shifts, or what protections survive a potential acquisition or restructuring down the line.

Connecting your bank account to ChatGPT might be genuinely useful. The spending dashboard is a real feature. The financial advice could be good. But OpenAI has now collected your health data and your financial data without clearly answering what a company under commercial pressure does with either of them long term.