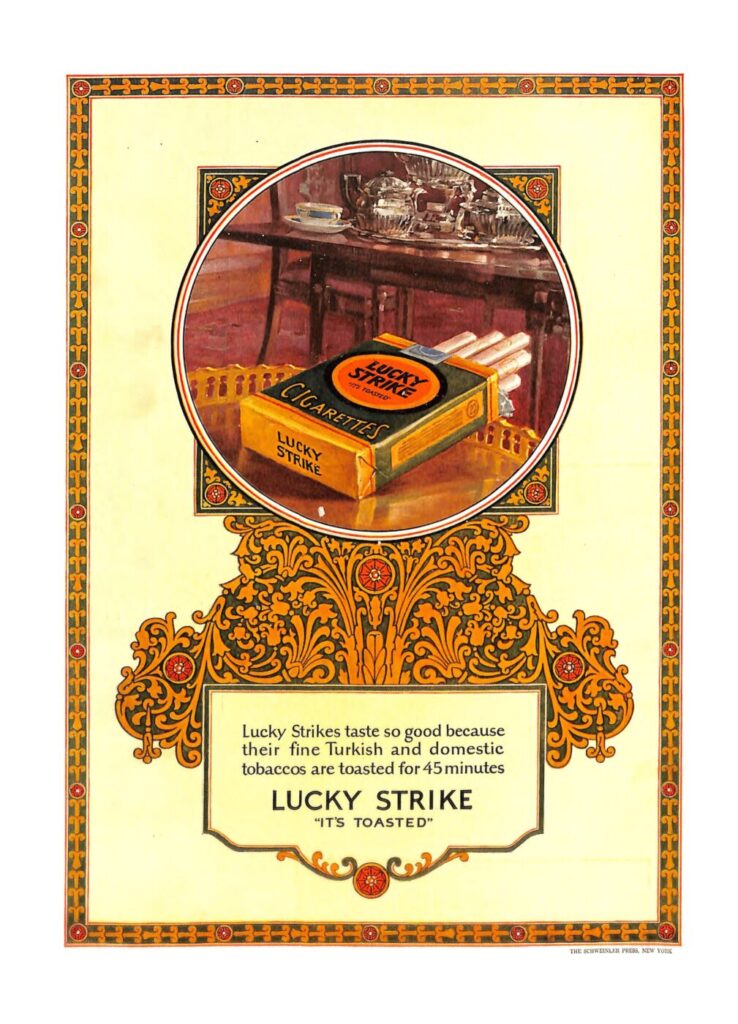

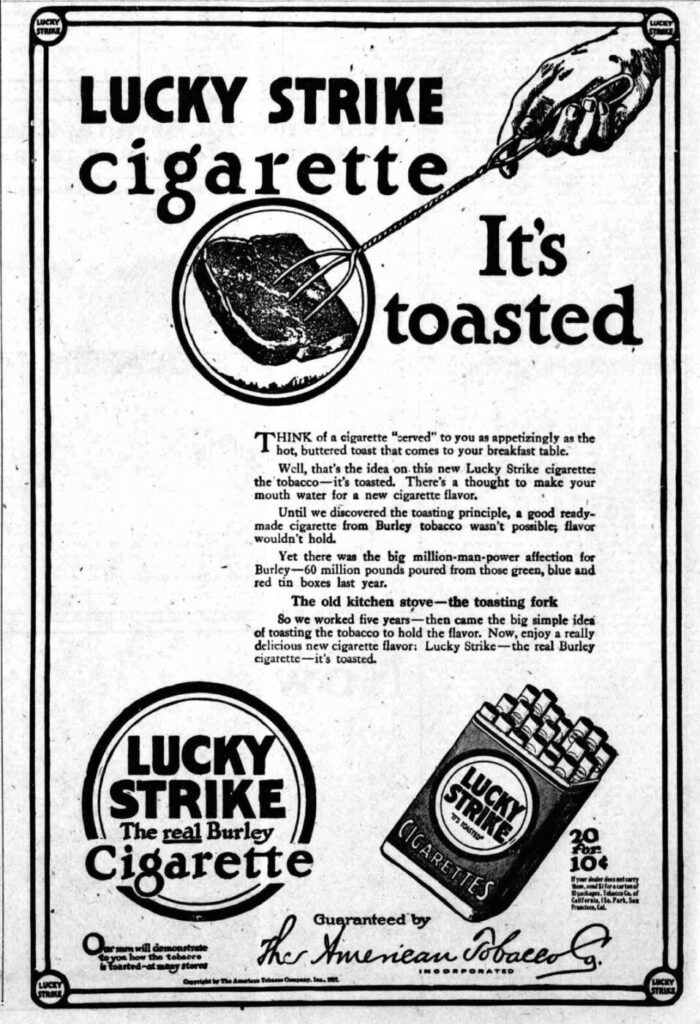

In 1917, American Tobacco launched an ad campaign for its Lucky Strike cigarette brand built around the slogan *It's Toasted*. The campaign was designed to address growing public awareness that cigarettes pose serious health hazards. Heat-treating tobacco was a common step in cigarette manufacturing, by no means unique to Lucky Strike, but by singling it out and giving it a name, American Tobacco managed to create the impression that Lucky Strike cigarettes were superior to, and less harmful than, other brands. This gave smokers an excuse to ignore the risks of smoking, shifting the conversation from the toxicity of the product to the supposed benefits of its preparation. A fictional account of the slogan's birth was famously featured in the pilot episode of *Mad Men*.

This public relations strategy, which takes a standard industry practice and frames it as a unique, premium benefit to distract from underlying risks, is one of the main ways social media companies, Meta above all, respond to public criticism of their products and their potential impact on users' mental health, especially among adolescents. It may be an effective public relations move, but in using it, Meta reinforces the conceptual link between its business and the tobacco industry.

Recent research suggests that social media design features such as infinite scroll and algorithmic feeds may encourage compulsive use and contribute to anxiety, depression, and social comparison. These concerns are amplified by claims that internal research at Meta identified negative psychological effects but was not always fully disclosed or acted upon, reinforcing public skepticism about platform accountability.

A century ago, smoking was normalized. Mounting evidence of harm, revelations that the industry knew the risks, and eventual regulatory action shifted public perception of cigarettes. While social media use is not directly equivalent to nicotine addiction, both follow a pattern in which widespread everyday consumption comes to be questioned as awareness grows of long-term health effects, particularly on young people, and as corporate incentives are increasingly seen as misaligned with public health. Furthermore, like cigarette manufacturers, social media platforms now stand accused by critics of knowingly designing harmful, addictive products and marketing them to vulnerable teens. In December 2025, Australia banned social media for children under 16, and many other countries are considering or have announced similar bans and restrictions. Some US states are mandating black-box-style warning labels with language like this:

“The Surgeon General has warned that while social media may have benefits for some young users, social media is associated with significant mental health harms and has not been proven safe for young users.”

Last month, Meta and Google's YouTube were found negligent in a case brought by a young woman identified as Kaley, who claimed that design features of their addictive products caused her mental health distress. Thousands of similar lawsuits against social media companies are pending in US courts, and this decision will influence many of those expected to go to trial this year. Meta CEO Mark Zuckerberg testified during the trial, arguing that platform safety features had been implemented appropriately and that Meta has invested heavily in youth safety measures. This emphasis on investment in safety is a recurring Meta PR strategy, evident across its websites and public statements. At a 2024 US Senate hearing, Zuckerberg told senators that Meta has 40,000 people working on safety and security. He also apologized to parents who say Instagram contributed to their children's suicides or exploitation:

“I’m sorry for everything you’ve all gone through. It’s terrible. No one should have to go through the things that your families have suffered…this is why we invest so much and are going to continue doing industry-leading efforts to make sure that no one has to go through the types of things that your families have had to suffer.”

Mark Zuckerberg may have been sincere in his apology, and Meta is undoubtedly investing in safety measures, which, according to Meta, include automated and human content moderation, age verification, parental supervision features, and awareness campaigns. These measures are not merely cosmetic; they can make social media safer. However, the repeated emphasis on investing in safety measures is essentially the *It's Toasted* strategy in action.

When Meta emphasizes safety measures, it shifts the focus away from the design of its potentially harmful products and towards the process of platform management. Meta responds to public criticism by saying it is making "industry-leading efforts" to create a safe environment for its users. What it does not say is that most of these safety measures are either required by legislation, such as the EU's Digital Services Act, or standard industry practice. Nor does it say that a social media empire with more than 3.5 billion daily active users cannot be run without a very large investment in content moderation and other policy enforcement. Meta must employ tens of thousands of moderators simply to keep its platforms from being flooded with illegal content that would alienate advertisers. By taking something essential to operating its platforms and marketing it as a premium feature that can make its products safe, Meta is engaging in a tactic of distraction.

Meta could work towards redesigning its social media platforms to be less addictive and less potentially harmful. It could eliminate infinite scroll and never-ending content streams in favor of pagination or end-of-feed markers. It could disable video autoplay by default, a feature that can draw users into an endless content loop. It could prioritize chronological feeds over algorithmic ones and, more generally, prioritize relationships over engagement, reducing the visibility of viral, controversial, often polarizing, or anxiety-inducing content. It could build interfaces that invite reflection rather than frictionless, automatic actions such as one-tap shares and quick reactions. It could remove or hide scoring mechanisms such as follower counts, likes, and view counts, which can trigger social comparison and anxiety. Finally, and most importantly, it could begin moving away from its surveillance-based revenue model towards business models that do not incentivize it to maximize user engagement.

Rather than consider meaningful architectural changes that could make its platforms less addictive and less harmful, Meta prefers to focus on reactive safety features, many of them optional, thereby deflecting responsibility for potential harm away from itself and onto its users. By focusing on parental supervision tools and awareness campaigns, it subtly shifts the burden of safety onto the victim, a maneuver that ignores how deliberately social media architecture is designed to keep users hooked. Meta is taking a page out of Big Tobacco's PR playbook, telling us, in effect, *It's Toasted*. In doing so, it places itself in the same category as tobacco manufacturers. No wonder legislators, regulators, and litigants are responding with the tools of the fight against smoking: massive lawsuits, bans, and mandated warnings. This is a very bad place for a technology company to be. Meta should now change direction and make meaningful, proactive design changes to make its products safe, not just toasted.

May 1, 2026 · Markets, Policy
