FFmpeg is truly a multi-tool for media processing. As an industry-standard tool it supports a wide variety of audio and video codecs and container formats. It can also orchestrate complex chains of filters for media editing and manipulation. For the people who use our apps, FFmpeg plays an important role in enabling new video experiences and improving the reliability of existing ones.

Meta executes ffmpeg (the main CLI application) and ffprobe (a utility for obtaining media file properties) binaries tens of billions of times a day, introducing unique challenges when dealing with media files. FFmpeg can easily perform transcoding and editing on individual files, but our workflows have additional requirements. For many years we had to rely on our own internally developed fork of FFmpeg to provide features that were only recently added upstream, such as threaded multi-lane encoding and real-time quality metric computation.

Over time, our internal fork came to diverge significantly from the upstream version of FFmpeg. At the same time, new versions of FFmpeg brought support for new codecs and file formats, and reliability improvements, all of which allowed us to ingest more diverse video content from users without disruptions. This necessitated that we support recent open-source versions of FFmpeg alongside our internal fork. Not only did this create a gradually divergent feature set, it also created challenges around safely rebasing our internal changes to avoid regressions.

As our internal fork became increasingly outdated, we collaborated with FFmpeg developers, FFlabs, and VideoLAN to develop features in FFmpeg that allowed us to fully deprecate our internal fork and rely exclusively on the upstream version for our use cases. Using upstreamed patches and refactorings we’ve been able to fill two important gaps that we had previously relied on our internal fork to fill: threaded, multi-lane transcoding and real-time quality metrics.

Building More Efficient Multi-Lane Transcoding for VOD and Livestreaming

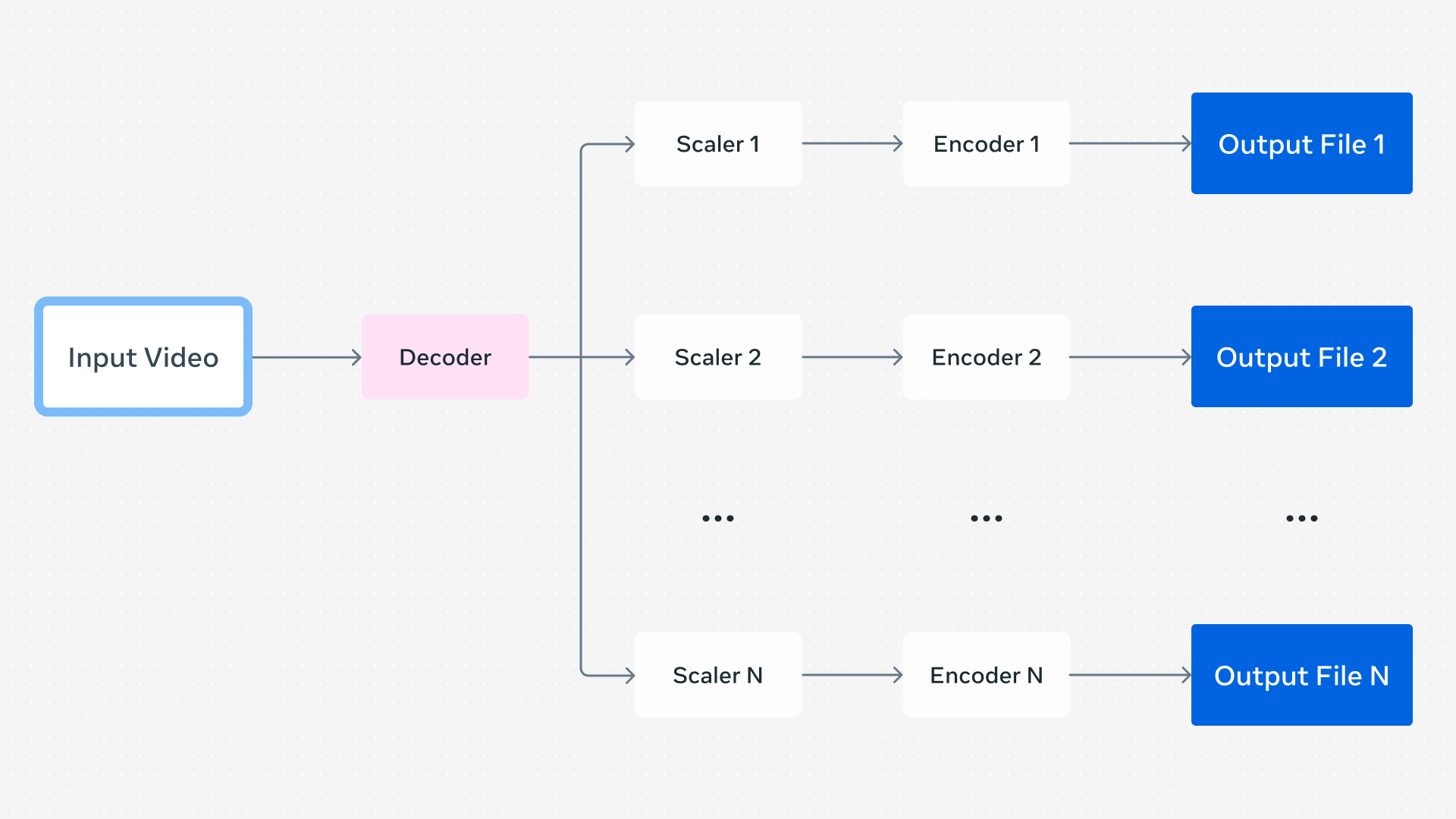

When a user uploads a video through one of our apps, we generate a set of encodings to support Dynamic Adaptive Streaming over HTTP (DASH) playback. DASH playback allows the app’s video player to dynamically choose an encoding based on signals such as network conditions. These encodings can differ in resolution, codec, framerate, and visual quality level but they are created from the same source encoding, and the player can seamlessly switch between them in real time.
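To make the player-side choice concrete, here is a minimal sketch of how a player might pick a lane from a measured throughput. The `Lane` type, the `choose_lane` function, the example ladder, and the 0.8 safety factor are all illustrative assumptions, not a description of any real player's logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lane:
    """One encoding in a hypothetical DASH ladder."""
    name: str
    height: int
    bitrate_kbps: int


def choose_lane(lanes, throughput_kbps, safety=0.8):
    """Return the highest-bitrate lane that fits within a fraction of the
    measured throughput, falling back to the lowest-bitrate lane."""
    budget = throughput_kbps * safety
    affordable = [lane for lane in lanes if lane.bitrate_kbps <= budget]
    if affordable:
        return max(affordable, key=lambda lane: lane.bitrate_kbps)
    return min(lanes, key=lambda lane: lane.bitrate_kbps)


# Hypothetical ladder; real ladders vary by codec, resolution, and framerate.
LADDER = [
    Lane("360p", 360, 800),
    Lane("720p", 720, 3000),
    Lane("1080p", 1080, 6000),
]

if __name__ == "__main__":
    for measured in (500, 5000, 10000):
        print(measured, "kbps ->", choose_lane(LADDER, measured).name)
```

Real players typically weigh more signals than throughput alone, such as buffer level and screen size.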

In a very simple system, separate FFmpeg command lines can generate the encodings for each lane one at a time. This could be sped up by running the commands in parallel, but that quickly becomes inefficient because each process repeats the same work, such as decoding the source video.
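As a rough illustration of the one-command-per-lane approach, the sketch below builds a separate ffmpeg argument list for each lane. The lane list, file names, and the choice of `libx264` are assumptions made for the example; the commands are only constructed here, not executed.

```python
# Hypothetical lanes: (output height, target video bitrate).
LANES = [(360, "800k"), (720, "3M"), (1080, "6M")]


def per_lane_commands(source, lanes):
    """Build one ffmpeg command per lane. Every command opens and decodes
    the same source again, which is the duplicated work described above."""
    commands = []
    for height, bitrate in lanes:
        commands.append([
            "ffmpeg", "-y", "-i", source,
            "-map", "0:v:0",                # first video stream of the input
            "-vf", f"scale=-2:{height}",    # keep aspect ratio, even width
            "-c:v", "libx264", "-b:v", bitrate,
            f"out_{height}p.mp4",
        ])
    return commands


if __name__ == "__main__":
    for cmd in per_lane_commands("input.mp4", LANES):
        print(" ".join(cmd))
```

Each list could be passed to `subprocess.run`, either sequentially or concurrently, but every process would still decode the source on its own.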

To work around this, multiple outputs could be generated within a single FFmpeg command line, decoding the frames of a video once and sending them to each output’s encoder instance. This eliminates a lot of overhead by deduplicating the video decoding and process startup time overhead incurred by each command line. Given that we process over 1 billion video uploads daily, each requiring multiple FFmpeg executions, reductions in per-process compute usage yield significant efficiency gains.
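A single-command version might look like the sketch below, which reads the input once and appends one output per lane. As before, the lanes, file names, and encoder settings are assumptions for illustration, and the command is built but not run.

```python
# Hypothetical lanes: (output height, target video bitrate).
LANES = [(360, "800k"), (720, "3M"), (1080, "6M")]


def multi_output_command(source, lanes):
    """Build one ffmpeg command with a single input and one output per lane.
    Options placed before each output file apply to that output only."""
    cmd = ["ffmpeg", "-y", "-i", source]
    for height, bitrate in lanes:
        cmd += [
            "-map", "0:v:0",
            "-vf", f"scale=-2:{height}",
            "-c:v", "libx264", "-b:v", bitrate,
            f"out_{height}p.mp4",
        ]
    return cmd


if __name__ == "__main__":
    print(" ".join(multi_output_command("input.mp4", LANES)))
```

Because the source appears only once, it is demuxed and decoded once, and only one process has to start up.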

Our internal FFmpeg fork provided an additional optimization to this: parallelized video encoding. While individual video encoders are often internally multi-threaded, previous FFmpeg versions executed each encoder in serial for a given frame when multiple encoders were in use. By running all encoder instances in parallel, better parallelism can be obtained overall.
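The difference in scheduling can be pictured with a toy model. In the sketch below, each "encoder" is just a quantizer standing in for a real codec, and a thread pool stands in for per-encoder threads. This is only a conceptual illustration under those assumptions; it does not reflect how FFmpeg's scheduler is actually implemented, and Python threads will not show a real speedup for this CPU-bound toy.

```python
from concurrent.futures import ThreadPoolExecutor


def make_encoder(step):
    """Toy stand-in for an encoder: rounds each sample down to a multiple of step."""
    def encode(frame):
        return [(value // step) * step for value in frame]
    return encode


def encode_serial(frames, encoders):
    """For each frame, run the encoders one after another."""
    return [[enc(frame) for enc in encoders] for frame in frames]


def encode_parallel(frames, encoders):
    """For each frame, hand the frame to all encoders at once and wait for them."""
    results = []
    with ThreadPoolExecutor(max_workers=len(encoders)) as pool:
        for frame in frames:
            futures = [pool.submit(enc, frame) for enc in encoders]
            results.append([future.result() for future in futures])
    return results


if __name__ == "__main__":
    frames = [[10, 27, 200], [5, 99, 131]]
    encoders = [make_encoder(4), make_encoder(16)]
    print(encode_parallel(frames, encoders) == encode_serial(frames, encoders))
```

Both functions produce the same output; only the order in which encoder work is scheduled differs.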

Thanks to contributions from FFmpeg developers, including those at FFlabs and VideoLAN, more efficient threading was implemented starting with FFmpeg 6.0, with the finishing touches landing in 8.0. This was directly influenced by the design of our internal fork and was one of the main features we had relied on it to provide. This development led to the most complex refactoring of FFmpeg in decades and has enabled more efficient encodings for all FFmpeg users.

To fully migrate off of our internal fork we needed one more feature implemented upstream: real-time quality metrics.

Enabling Real-Time Quality Metrics While Transcoding for Livestreams

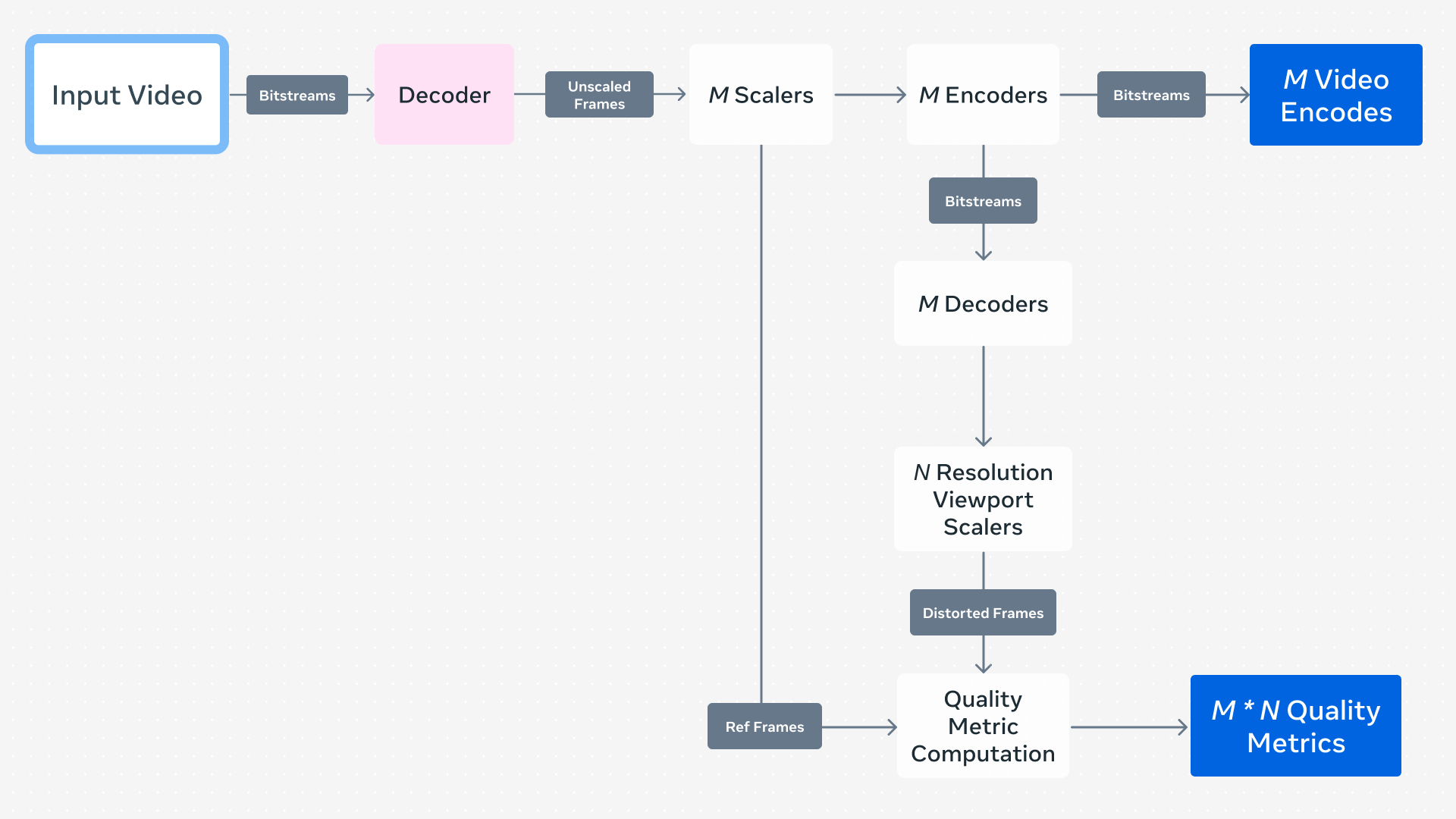

Visual quality metrics, which give a numeric representation of the perceived visual quality of media, can be used to quantify the quality loss incurred from compression. These metrics are categorized as reference or no-reference metrics: the former compares a reference encoding to some other distorted encoding, while the latter estimates quality from the distorted encoding alone.
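As an example of a reference metric, PSNR is derived from the mean squared error between the reference and distorted samples: 10 * log10(MAX^2 / MSE), where MAX is the largest possible sample value. The sketch below computes it over flat lists of 8-bit samples; the function name and input layout are assumptions for illustration, and production tools operate on full frames and color planes.

```python
import math


def psnr(reference, distorted, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length sample lists."""
    if not reference or len(reference) != len(distorted):
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((r - d) ** 2 for r, d in zip(reference, distorted)) / len(reference)
    if mse == 0:
        return math.inf  # identical inputs: no distortion
    return 10 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    ref = [100, 100, 100, 100]
    dist = [101, 99, 101, 99]  # every sample is off by one, so MSE is 1
    print(round(psnr(ref, dist), 2))
```

Higher values mean the distorted samples are closer to the reference.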

FFmpeg can compute various visual quality metrics such as PSNR, SSIM, and VMAF by comparing two existing encodings in a separate command line after encoding has finished. This works for offline or VOD use cases, but not for livestreaming, where we might want to compute quality metrics in real time.
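For the offline case, a command along the following lines feeds both encodings to one of FFmpeg's metric filters and discards the video output. The helper name and file names are assumptions; the sketch also assumes both files have the same resolution and frame count, and that the FFmpeg build includes libvmaf if that metric is requested.

```python
SUPPORTED_METRICS = {"psnr", "ssim", "libvmaf"}


def offline_metric_command(distorted, reference, metric="psnr"):
    """Build an ffmpeg command that compares two finished encodings.
    The distorted file is the first input and the reference is the second."""
    if metric not in SUPPORTED_METRICS:
        raise ValueError(f"unsupported metric: {metric}")
    return [
        "ffmpeg",
        "-i", distorted,
        "-i", reference,
        "-lavfi", f"[0:v][1:v]{metric}",  # metric results are written to the log
        "-f", "null", "-",                # discard the filtered video
    ]


if __name__ == "__main__":
    print(" ".join(offline_metric_command("lane_720p.mp4", "source.mp4", "ssim")))
```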

To do this, we need to insert a video decoder after each video encoder used by each output lane. These provide bitmaps for each frame in the video after compression has been applied so that we can compare against the frames before compression. In the end, we can produce a quality metric for each encoded lane in real time using a single FFmpeg command line.
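The sketch below shows roughly what such a command could look like for a single lane, modeled on the loopback decoder (`-dec`) example in the FFmpeg documentation. It is an untested assumption rather than the command we run: the encoder settings, file names, and use of the `psnr` filter are illustrative, and it assumes the lane keeps the source resolution so the two filter inputs match.

```python
def inloop_psnr_command(source, encoded_out):
    """Build an ffmpeg (7.0+) command that encodes a lane, decodes the encoder's
    output with a loopback decoder, and compares it against the source frames."""
    return [
        "ffmpeg", "-i", source,
        # Output 0: the encoded lane itself.
        "-map", "0:v:0", "-c:v", "libx264", "-b:v", "3M", encoded_out,
        # Loopback decoder 0 decodes stream 0 of output 0.
        "-dec", "0:0",
        # Compare decoded frames (main) against the original frames (reference).
        "-filter_complex", "[dec:0][0:v]psnr[metric]",
        # Output 1: discard the filter's video; PSNR is reported in the log.
        "-map", "[metric]", "-f", "null", "-",
    ]


if __name__ == "__main__":
    print(" ".join(inloop_psnr_command("source.mp4", "lane.mp4")))
```

For lanes that change resolution, the source frames would need to be scaled to match before the comparison.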

Thanks to “in-loop” decoding, which was enabled by FFmpeg developers including those from FFlabs and VideoLAN, beginning with FFmpeg 7.0, we no longer have to rely on our internal FFmpeg fork for this capability.

We Upstream When It Will Have the Most Community Impact

Features like real-time quality metrics while transcoding and more efficient threading can bring efficiency gains to a variety of FFmpeg-based pipelines both inside and outside of Meta, and we strive to enable these developments upstream to benefit the FFmpeg community and wider industry. However, there are some patches we’ve developed internally that don’t make sense to contribute upstream. These are highly specific to our infrastructure and don’t generalize well.

FFmpeg supports hardware-accelerated decoding, encoding, and filtering with devices such as NVIDIA’s NVDEC and NVENC, AMD’s Unified Video Decoder (UVD), and Intel’s Quick Sync Video (QSV). Each device is supported through an implementation of standard APIs in FFmpeg, allowing for easier integration and minimizing the need for device-specific command line flags. We’ve added support for the Meta Scalable Video Processor (MSVP), our custom ASIC for video transcoding, through these same APIs, enabling the use of common tooling across different hardware platforms with minimal platform-specific quirks.
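To illustrate how little the command line has to change between platforms, the sketch below swaps only the hardware-acceleration flag and encoder name. The backend table and helper are assumptions for the example, and each option works only on FFmpeg builds and machines with the matching hardware support. MSVP is left out because its option names are not public.

```python
# Hypothetical mapping from backend name to the ffmpeg options that differ.
BACKENDS = {
    "software": {"input_opts": [], "encoder": "libx264"},
    "nvidia": {"input_opts": ["-hwaccel", "cuda"], "encoder": "h264_nvenc"},
    "intel_qsv": {"input_opts": ["-hwaccel", "qsv"], "encoder": "h264_qsv"},
}


def transcode_command(source, output, backend="software"):
    """Build an H.264 transcode command for the chosen backend."""
    if backend not in BACKENDS:
        raise ValueError(f"unknown backend: {backend}")
    cfg = BACKENDS[backend]
    return ["ffmpeg", *cfg["input_opts"], "-i", source, "-c:v", cfg["encoder"], output]


if __name__ == "__main__":
    for name in BACKENDS:
        print(name, "->", " ".join(transcode_command("input.mp4", "out.mp4", name)))
```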

As MSVP is only used within Meta’s own infrastructure, it would create a challenge for FFmpeg developers to support it without access to the hardware for testing and validation. In this case, it makes sense to keep patches like this internal since they wouldn’t provide benefit externally. We’ve taken on the responsibility of rebasing our internal patches onto more recent FFmpeg versions over time, utilizing extensive validation to ensure robustness and correctness during upgrades.

Our Continued Commitment to FFmpeg

With more efficient multi-lane encoding and real-time quality metrics, we were able to fully deprecate our internal FFmpeg fork for all VOD and livestreaming pipelines. And thanks to standardized hardware APIs in FFmpeg, we’ve been able to support our MSVP ASIC alongside software-based pipelines with minimal friction.

FFmpeg has withstood the test of time with over 25 years of active development. Developments that improve resource utilization, add support for new codecs and features, and increase reliability enable robust support for a wider range of media. For people on our platforms, this means enabling new experiences and improving the reliability of existing ones. We plan to continue investing in FFmpeg in partnership with open source developers, bringing benefits to Meta, the wider industry, and people who use our products.

Acknowledgments

We would like to acknowledge contributions from the open source community, our partners in FFlabs and VideoLAN, and many Meta engineers, including Max Bykov, Jordi Cenzano Ferret, Tim Harris, Colleen Henry, Mark Shwartzman, Haixia Shi, Cosmin Stejerean, Hassene Tmar, and Victor Loh.