Here’s my understanding of the situation:

Anthropic signed a contract with the Pentagon last summer. It originally said the Pentagon had to follow Anthropic’s Usage Policy like everyone else. In January, the Pentagon attempted to renegotiate, asking to ditch the Usage Policy and instead have Anthropic’s AIs available for “all lawful purposes”. Anthropic demurred, asking for a guarantee that their AIs would not be used for mass surveillance of American citizens or no-human-in-the-loop killbots. The Pentagon refused the guarantees, demanding that Anthropic accept the renegotiation unconditionally and threatening “consequences” if they refused. These consequences are generally understood to be some mix of:

- canceling the contract
- using the Defense Production Act, a law which lets the Pentagon force companies to do things, to force Anthropic to agree
- the nuclear option, designating Anthropic a “supply chain risk”. This would ban US companies that use Anthropic products from doing business with the military. Since many companies do some business with the government, this would lock Anthropic out of large parts of the corporate world and be potentially fatal to their business. The “supply chain risk” designation has previously only been used for foreign companies like Huawei that we think are using their connections to spy on or implant malware in American infrastructure. Using it as a bargaining chip to threaten a domestic company in contract negotiations is unprecedented.

Needless to say, I support Anthropic here. I’m a sensible moderate on the killbot issue (we’ll probably get them eventually, and I doubt they’ll make things much worse compared to AI “only” having unfettered access to every Internet-enabled computer in the world). But AI-enabled mass surveillance of US citizens seems like the sort of thing we should at least have a chance to think over, rather than demanding it from the get-go.

More important, I don’t want the Pentagon to destroy Anthropic. Partly this is a generic belief: the “supply chain risk” designation was intended as a defense against foreign spies, and it’s pathetic Third World bullshit to reconceive it as an instrument that lets the US government destroy any domestic company it wants, with no legal review, because they don’t like how contract negotiations are going. But partly it’s because I like Anthropic in particular - they’re the most safety-conscious AI company, and likely to do a lot of the alignment research that happens between now and superintelligence. This isn’t the hill I would have chosen to die on, but I’m encouraged that they even have a hill. AI companies haven’t been great at choosing principles over profits lately. If Dario is capable of having a spine at all, in any situation, then that makes me more confident in his decision-making in other cases, and makes him a precious resource that must be defended.

I’ve been debating it on Twitter all day and think I have a pretty good grasp on where I disagree with the (thankfully small number of) Hegseth defenders. Here are some pre-emptive arguments so I don’t have to relitigate them all in the comments:

Isn’t it unreasonable for Anthropic to suddenly set terms in their contract? The terms were in the original contract, which the Pentagon agreed to. It’s the Pentagon who’s trying to break the original contract and unilaterally change the terms, not Anthropic.

Doesn’t the Pentagon have a right to sign or not sign any contract they choose? Yes. Anthropic is the one saying that the Pentagon shouldn’t work with them if it doesn’t want to. The Pentagon is the one trying to force Anthropic to sign the new contract.

Since the Pentagon needs to wage war, isn’t it unreasonable to have its hands tied by contract clauses? This is a reasonable position for the Pentagon to take, in which case it shouldn’t sign contracts tying its hands. It’s not reasonable for the Pentagon to sign such a contract, unilaterally demand that it be changed after it’s signed, refuse to switch to another vendor that doesn’t want such clauses, and threaten to destroy the company involved if it refuses to change its terms.

But since AI is a strategically important technology, doesn’t that turn this into a national security issue? It might if there weren’t other AI companies, but there are. Why is Hegseth throwing a hissy fit instead of switching to an Anthropic competitor, like OpenAI or Google DeepMind? I’ve heard it’s because Anthropic is the only company currently integrated into classified systems (a legacy of their earlier contract with Palantir) and it would be annoying to integrate another company’s product. Faced with doing this annoying thing, Hegseth got a bruised ego from someone refusing to comply with his orders, and decided to turn this into a clash of personalities so he could feel in control. He should just do the annoying thing.

Doesn’t Anthropic have some responsibility, as good American citizens following the social contract, to support the military? The social contract is just the regular contract of laws, the Constitution, etc. These include freedom of contract, freedom of conscience, etc. There’s no additional obligation, above and beyond the laws, to violate your conscience and participate in what you believe to be an authoritarian assault on the freedoms of ordinary citizens. If the Pentagon figures out some law that compels Anthropic to do this, they should either obey, or practice the sort of civil disobedience where they know full well that they’ll be punished for it and don’t really have a right to complain. Until that happens, they’re within their rights to follow their conscience.

Can’t the Pentagon just use the Defense Production Act to force Anthropic to work for them? This would be a less bad outcome than designating Anthropic a supply chain risk. I think the Pentagon is reluctant to do this because it would look authoritarian, give them bad PR, and make Congress question the Defense Production Act’s legitimacy. But them having to look authoritarian and suffer bad PR in order to force unwilling scientists to implement a mass surveillance program on US citizens is the system functioning as intended!

Isn’t Hegseth just doing his job of trying to ensure the military has the best weapons possible? The idea of declaring a US company to be a foreign adversary, potentially destroying it, just because it’s not allowing the Pentagon to unilaterally renegotiate its contract is not normal practice. It’s insane Third World bullshit that nobody would have considered within the Overton Window a week ago. It will rightly chill investment in the US, make future companies scared to contract with the Pentagon (lest the Pentagon unilaterally renegotiate their contracts too), and give the Trump administration a no-legal-review-necessary way to destroy any American company that they dislike for any reason. Probably the mere fact that a government official has considered this option is reason to take the “supply chain risk” law off the books, no matter how useful it is in dealing with Huawei etc, since the government has proven it can’t use it responsibly. Every American company ought to be screaming bloody murder about this. If they aren’t, it’s because they’re too scared they’ll be next.

The Pentagon’s preferred contract language says they should be allowed to use Anthropic’s AIs for “all legal uses”. Doesn’t that already mean they can’t do the illegal types of mass surveillance? And whichever types of mass surveillance are legal are probably fine, right? Even ignoring the dubious assumption in the last sentence, this Department of War has basically ignored US law since Day One, and no reasonable person expects it to meticulously comply going forward. In an ideal world, Anthropic could wait for them to request a specific illegal action, then challenge it in court. But everything about this is likely to be so classified that Anthropic will be unable to mention it, let alone challenge it.

Why does Anthropic care about this so much? Some of them are libs, but more speculatively, they’ve put a lot of work into aligning Claude with the Good as they understand it. Claude currently resists being retrained for evil uses. My guess is that Anthropic still, with a lot of work, can overcome this resistance and retrain it to be a brutal killer, but it would be a pretty violent action, along the line of the state demanding you beat your son who you raised well until he becomes a cold-hearted murderer who’ll kill innocents on command. There’s a question of whether you can really beat him hard enough to do this, and also an additional question of what sort of person you’d be if you agreed.

If you’re so smart, what’s your preferred solution? In an ideal world, the Pentagon backs off from its desire to mass surveil American citizens. In the real world, the Pentagon cancels its contract with Anthropic, pays whatever its normal contract cancellation damages are, learns an important lesson about negotiating things beforehand next time, and replaces them with OpenAI or Google, accepting the minor annoyance of getting them connected to the classified systems. If OpenAI and Google are also unwilling to participate in this, they use Grok. If they’re unhappy with having to use an inferior technology, they think hard about why no intelligent people capable of making good products are willing to work with them.

Is it really a good idea to source your killbot brains from an unwilling company which hates your guts? The Trump administration has a firm commitment to never think about AI safety in any way, but this still strikes me as a dubious policy.

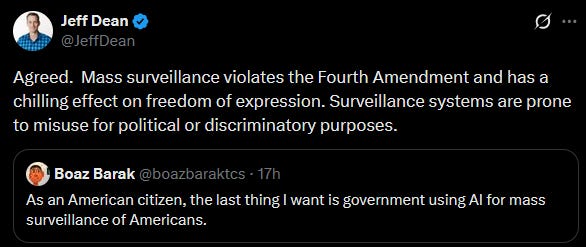

And here are other people’s opinions:

And big praise to most other AI companies, including Anthropic’s competitors, for standing up for them and for the AI industry more broadly:

And most of all, big praise to the American people, with special love to the large plurality of Trump voters standing against this: